MM #9: stolen glances

madeline wants your attention

There’s only two kinds of people in this world: the ones that entertain and the ones that observe. — Britney Spears, 2008

I saw Challengers last week and [THIS IS NOT A SPOILER UNLESS YOU’RE LIKE TRULY THE BIGGEST FREAK OF ALL TIME] there are a couple of shots in the movie where the camera occupies the tennis players’ point of view—a blur of forearm and Head logo. Then there’s an even stupider shot where the camera careens over the court as if we, the audience, were the ball (be the ball, Danny)—our passive attention a medium facilitating the electricity between Art and Patrick. I enjoyed the movie, I might even say it was good, but I’ve spent the week since struggling over something Luca Guadagnino grapples with in the film: whether greatness is still possible.

Greatness, in the film and in life, is really the result of sustained attention: demanding a commitment from both the artist and the viewer that I find increasingly hard to muster. Recently, my commitment-phobia echoed back to me somewhere in the middle distance between three screens when I saw a clip of an actor talking about a directive from Netflix executives to write scripts slow and repetitive enough that audiences could watch with double screens, staring at their phones or laptops while the show played on their TV. As my eyes darted from one screen to the other, it occurred to me that turning the tennis ball into the eyeball, or vice versa, is an apt metaphor for the way we consume media now.

But even saying “now” is misleading, implying that this type of attention—or attention deficit—is new. Rather, as art historian Jonathan Crary has argued, this shift began in the early nineteenth century, making it ripe for reflection here. For the last 200 years, new media technologies haven’t created revolutions or breaks in the way we watch; instead, they’ve simply perpetuated and intensified changes set in motion with an emerging culture of image consumption. From illustrated newspapers in the nineteenth century to the 24-hour news cycle in the twentieth, and beyond to whatever one might call the gluttonous image buffet I describe above, we have spent the era commonly referred to as modernity figuring out ways to consume more and more images as quickly as possible. In Suspensions of Perception (MIT Press, 1999), Crary quotes from Max Nordau’s polemic against modern art and technology and their deleterious effects on people’s bodies, Degeneration (1892). Nordau predicted that at the close of the twentieth century there would be a “‘generation to whom it will not be injurious to read a dozen square yards of newspapers daily, to be constantly called to the telephone, to be thinking simultaneously of the five continents of the world…[and] know how to find its ease in the midst of a city inhabited by millions.’”1 To which Crary responds, “What he and others failed to grasp then was that modernization was not a one-time set of changes but an ongoing and perpetually modulating process that would never pause for individual subjectivity to accommodate and ‘catch up’ with it.”2 While it is true that the public at the end of the twentieth century consumed media at what Nordau would have considered a monstrous pace, they did not reach a place of stasis. Rather, media technologies continued to change and accelerate, continually preventing people from “finding their ease.”

In January of 1984, Apple debuted their Macintosh computer, designed as a challenge to IBM, bringing personal computing from the corporate world into everyday life. Two days before the product launch, Apple aired their famous “1984” commercial during the Super Bowl. Alluding to George Orwell’s dystopian novel, 1984 (1949), the ad’s director, filmmaker Ridley Scott, presented audiences with a gray world inhabited by inexpressive drones, resembling the black and white picture of old television sets. A simultaneous broadcast can be heard and seen on screens throughout the dismal scene, the voice of a villainous leader echoing: “we are one people, one will, one resolve, one cause.” Suddenly, a blonde woman in living color—her shorts are bright red—breaks through, evidently eluding the riot police pursuing her, and hurls her hammer at Big Brother’s bespectacled face. After a lingering shot of the dumbstruck audience, a voiceover reads the famous tagline: “On January 24th, Apple Computer will introduce Macintosh. And you’ll see why 1984 won’t be like 1984.” Apple had announced itself as the tool with which to set oneself free.

At the same time, Apple aired an ad in France showing nineteenth-century bureaucratic drudges sitting in tidy rows, completely uniform and unindividuated from one another, performing operations on telegraph machines. A voiceover tells us that in 1876, thousands had devoted themselves to the telegraph when a message came through that Alexander Graham Bell had invented the telephone—to the shock and horror of the office manager. A parallel event, the voiceover informs us, happens in 1984. A sterile white office filled with uniform rows of secretaries clacking away on IBM computers is interrupted when a message comes through the dot matrix printer and fax machine: “Apple has invented the Macintosh.” The commercial ends with the slogan: “No longer learn to become a machine. Apple has invented the Macintosh.” For the director of the French Macintosh ad, the technological revolution at the end of the twentieth century was not revolutionary at all. Crary expresses a similar sentiment, writing, “this more developed form of sedentarization, of cellular space mapped out on a global scale, is less the consequence of new technologies and inventions, than the banal legacy of the nineteenth century, and the dream fabricated then of the complete bureaucratization of society.”3

Though the two commercials couldn’t be more aesthetically different, one science fiction, the other historical fiction, it is not a coincidence that, as a pair, they link the nineteenth century to screen technology’s uprooting, mobilization, and multiplication. These twin Macintosh commercials encapsulate the argument made over the course of Crary’s career: from the realization in the 1980s of the nineteenth-century desire for disciplined and uniform workers and an unimpeded flow of information across the globe to the dissolution of television’s centralized authority into mobile screens targeting individuals.

Writing in 1984, Crary identifies constant motion and acceleration as the defining features of modernity’s mediums. In the essay “Eclipse of the Spectacle,” Crary writes that “the TV screen and the car windshield reconciled visual experience with the velocities and discontinuities of the marketplace.”4 Here, Crary anticipates our present mobile screen culture, demonstrating the accelerating sophistication of these technologies between the 1880s and the 1980s. In Crary’s oeuvre, television realizes nineteenth-century fantasies about complete attention and control. Crary writes:

For the last hundred years perceptual modalities have been, and continue to be, in a state of perpetual transformation or…crisis. If visual experience can be said to have any enduring characteristic within twentieth-century modernity, it is that it has no enduring features; rather, it is embedded in a rhythm of adaptability to new technological relations, social configurations, and economic imperatives.5

Crary provides a definition of modernization that divorces the concept from the false narrative of progress; for Crary, modernity is defined by the continual production of the new that allows things to stay the same. Television is a technology that both exemplifies and has been rendered obsolete by the “destabilizing process of modernization…a self-perpetuating, directionless creation of new needs and desires.”6 In 24/7 (Verso, 2013), he will write about the constant production of new technologies developed to create an unending stream of desire, so enraptured are we that there is no room for reflection. Without space to think, political dissent or even refusal to participate in capitalist modes of consumption becomes impossible. The realm of sleep, Crary argues, is the last vestige of everyday life, a holdout that is ever more encroached upon by attention-seeking technologies.

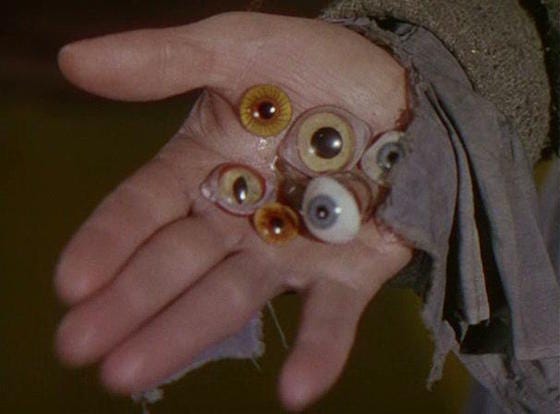

In 2017, Reed Hastings, a Netflix executive, identified sleep as the company’s biggest competitor, ominously adding “and we’re winning.” As early as 1816, the German author E.T.A. Hoffmann published “Der Sandmann,” conceiving the eyeball as modernity’s organ and sleep as its refuge. In Hoffmann’s short horror story, a young man named Nathanael is sent into a state of psychological turmoil after an itinerant glass eye salesman triggers his childhood fear of the Sandman—a monstrous figure who visited his father each evening just as the children were sent to bed. When Nathanael inquires about his father’s nightly guest, a nursemaid tells him that “he’s a wicked man who comes to children when they don’t want to go to bed and throws handfuls of sand in their eyes; that makes their eyes fill with blood and jump out of their heads, and he throws their eyes in a bag and takes them into the crescent moon to feed his own children.”7 This underlying terror shapes the events that follow, as Nathanael’s vision, his eye, is absorbed into a network of new—modern—relationships with people, machines, and his own subjectivity. Later in the story, Nathanael uses an eyeglass, a technological apparatus, to extend his field of vision with the hopes of making meaningful contact with his beloved Olympia. But Olympia herself is merely a machine, an automaton constructed by a clever professor, and is thus unable to return his gaze. The eyeglass and the automaton, combined with a heady dose of Nathanael’s psychological subjectivity, illustrate the uneasy place of his individual perception in a modernizing world mediated by new technologies.

Nathanael’s primal terror, an uneasiness in the triangular relationship between his subjectivity, objectivity, and eye, is usually explained in Freudian terms. In fact, Hoffmann’s tale would become Sigmund Freud’s emblematic example of the uncanny, creating a psycho-analytical model for interpreting horror stories that would remain with film and media studies well into the 1980s. Freud diagnosed Hoffmann’s protagonist with a classic castration complex, placing the source of his angst within his own mind. In his 1982 essay, “Psychopathways: Horror Movies and the Technology of Everyday Life,” Crary rejects the lingering influence of this kind of Freudian analysis of horror. He writes, “How often we still hear the same restricted vocabulary to pinpoint the source of horror: the unconscious, the dark side, the return of the repressed, and so on.”8 Crary believes that “horror is no longer a dehistoricized experience of the human psyche but rather the manifold product of a specific technological and social landscape.”9 In this essay’s interpretation of horror films including Psycho (dir. Alfred Hitchcock, 1960) and Videodrome (dir. David Cronenberg, 1983), horror is rooted in the destabilizing social relationship with technologies rather than in repressed childhood trauma. As in Hoffmann’s story, in Crary’s post-modern society, lack of sleep makes the eye vulnerable to attack. Sleep stands as the last refuge from a world mediated by screens whose main currency is “eyeballs,” the metric of engagement utilized by big tech companies and streaming services.

Elsewhere, Crary discusses Baudrillard’s conception of television as a medium of implosion between the viewer and the viewed in which the eye and the apparatus become a “perfect circuit.” This circuit powers accelerating digital media culture as, over time, the “eyeball” becomes the currency that large tech companies traffic in. Google, Facebook, and TikTok are all competing for their market share of our attention. And as the eye fuels those corporations, it also acts on our physical bodies, which are locked into this circuit: “The eye is dislodged from the realm of optics and made into an intermediary element of a circuit whose end result is always a motor response to the body of electronic solicitation.”10 In this “perfect circuit” there is no differentiation between the observer and that which they observe, no individual.

It is no coincidence that television is often referred to colloquially as “the narcotic of the masses.” In “The Gadget Lover: Narcissus as Narcosis,” media theorist and philosopher Marshall McLuhan (1911-1980) reflects on how changing media technology reconfigures desire. He tells the story of Narcissus, restaging the usual interpretation of the love relationship Narcissus enters with himself as a form of “autoamputation.” Rather than reading Narcissus’s reflection as an extension of himself, McLuhan suggests that it instead has a narcotic effect (according to McLuhan, in Greek narcotic means numbness), numbing being an act of self-preservation for an over-stimulated nervous system. McLuhan explains that “the principle of self-amputation as an immediate relief of strain on the central nervous system applies very readily to the origin of the media of communication from speech to computer.”11 Narcissus and his reflection, then, are prototypical of the circuit formed by the self, the screen, and the eye with the screen acting as both an extension and amputation of the self. McLuhan writes:

With the arrival of electric technology, man extended, or set outside himself, a live model of the central nervous system itself. To the degree that this is so, it is a development that suggests a desperate and suicidal autoamputation, as if the central nervous system could no longer depend on the physical organs to be protective buffers against the slings and arrows of outrageous mechanism.12

Screens are both the problem and the balm here, though the auto-immune response of our attachment to them is, in McLuhan’s eyes, borderline suicidal. In this passage, McLuhan makes a surprising reference to Shakespeare’s Hamlet—in the famous “to be or not to be?” soliloquy, Hamlet contemplates suicide and death, questioning “whether ’tis nobler in the mind to suffer/ The slings and arrows of outrageous fortune/ Or to take arms against a sea of troubles/ And by opposing end them? To die: to sleep.” Like Crary, McLuhan seems to be suggesting that sleep and death are the only spaces of resistance that remain.

For the record, perhaps as a result of sleeping enough the last few nights, I don’t crave death rn. As of last week, I’m on a three-month summer vacation and, in spite of the borderline hysteria about disassociation and suspension expressed above, I am feeling very sensual and alive to the world and full of love and grateful for it, feelings cheapened by sharing them in a newsletter but nonetheless real. I do think these discussions often lack something—hope, I guess. The best I can offer is another Hamlet quote, one that expresses the ineffable boundlessness of self and the unfortunate limiting reality of the world the self inhabits:

O God, I could be bounded in a nutshell, and count myself a king of infinite space—were it not that I have bad dreams.

Anyway, please invite me to your beach house this summer. I’m actually really fun, everybody says so. If you’re not feeling me, do the next best thing and spend the summer with Mandylion by getting your books at mandylionpress.com.

As always,

Madeline <3

Jonathan Crary, Suspensions of Perception (Cambridge, MA: MIT Press, 1999), 30.

Ibid.

Crary, Tricks of the Light (New York: Zone Books, 2023), 105.

Ibid., 104.

Ibid., 148.

Ibid.

E.T.A. Hoffmann, “Der Sandmann” in The Golden Pot and Other Tales (Oxford: Oxford University Press, 1992), 87.

Crary, Tricks of the Light, 81.

Ibid., 95.

Jonathan Crary, 24/7 (New York: Verso Books, 2013), 76.

Marshall McLuhan, “The Gadget Lover: Narcissus as Narcosis” in Understanding Media: The Extensions of Man (Cambridge, MA: MIT Press, 1994), 43.

Ibid.